In my previous post on deep learning, I wrote about some of the fallout that could result from misunderstanding what deep learning is really learning. I mentioned some possible significant consequences:

- Wasted resources as venture capitalists throw money at anything that has to do with deep learning.

- Wasted resources as non-expert government agencies fund any research project that has the term “deep learning” in it.

- A boatload of computer science graduates around the world who, all of a sudden, have found their “passion” in deep learning.

- Disappointed companies as deep learning does not have the expected impact on their bottom line.

- Another AI winter.

Let’s look at the last bullet point. Rodney Brooks, former MIT professor and co-founder of iRobot and Rethink Robotics, predicts that we will enter a new AI winter in 2020.

An AI winter is a period of reduced funding and interest in artificial intelligence research that comes at the tail end of an AI hype cycle. Each AI hype cycle begins with some major breakthrough. Then, for the next 5-10 years after that breakthrough, all sorts of papers get written on AI, companies that are doing “X + [insert some new hot AI technology]” get funded, computer science students around the world change their career paths, and the media goes into a feeding frenzy about how the new breakthrough will change the world.

Executives at big companies around the world then shout out quotations like this: “AI is more profound than … electricity or fire” – Sundar Pichai (CEO of Google).

Experts in AI then chime in, “This time is different!”

When you hear comments like this, ask yourself, “What are they selling?”

Deep learning is a tool like any other tool…like a wrench for a car mechanic or a serrated knife for a master chef. Deep learning can currently solve specific problems really well but others not so well.

Machine learning, the field that encompasses deep learning, is about automating the process of finding relationships based on empirical data. It is a powerful tool that has an enormous amount of potential, but it is not a panacea and is still a long way away from replacing the human brain.

I do agree with Mr. Pichai that true artificial general intelligence, when we have it, would be a breakthrough as profound as electricity or fire. We are not there yet. Much more work needs to be done (and that is a great thing for us scientists and engineers). The future is bright.

How Can We Help Others Gain a Better Understanding of What These Models Are Learning?

The example that is often marketed to explain deep learning is a neural network that first takes the inputs and learns lines, curves, and other shapes. Each successive layer abstracts and combines the data more and more until we see letters and fully formed images. This method of explaining deep learning seems like a good example of how we might gain an understanding of what exactly these algorithms actually learn.

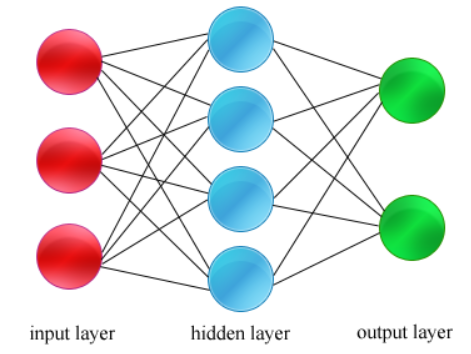
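To make the first step of that picture concrete, here is a minimal sketch in Python with NumPy of what "learning lines" might look like in an early layer. Everything in it is an assumption for illustration: the tiny 6x6 image, the helper name `cross_correlate2d`, and the filter itself, which is written by hand here rather than learned. A real network would arrive at its own filters during training, and they may look quite different.

```python
import numpy as np

def cross_correlate2d(image, kernel):
    """Slide the kernel over the image (no padding) and return the response map.

    This is the sliding-window operation that deep learning libraries
    usually call "convolution".
    """
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

# Hypothetical 6x6 image: dark left half (0), bright right half (1).
image = np.zeros((6, 6))
image[:, 3:] = 1.0

# Hand-written filter that responds to dark-to-bright vertical edges.
vertical_edge = np.array([[-1.0, 0.0, 1.0],
                          [-1.0, 0.0, 1.0],
                          [-1.0, 0.0, 1.0]])

response = cross_correlate2d(image, vertical_edge)
print(response)
```

The response is zero over the flat regions and large only where the window straddles the boundary between dark and bright. Later layers combine many such responses into curves, shapes, and eventually whole objects.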

I think that one reason people might not trust deep learning is that they don’t understand how it works, and even when they do, they cannot see what happens inside the hidden layers of the neural network. A deep learning network has multiple layers, including hidden layers, each of which is composed of neurons.

With deep learning, we allow the program to learn and distinguish the key features from our sample data set. The problem is that we may not easily be able to understand which features it has picked out, or how they are related to each other through the hidden layers of the learned model.

I think that one strategy for improving, or at least understanding, what deep learning is doing is to unpack these abstracted layers in order to hand-tune the results into something that is more relevant.
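As a rough illustration of what "unpacking" a hidden layer could mean, here is a minimal sketch in Python with NumPy. It is a toy under stated assumptions, not a real trained model: the weights `W1`, `b1`, `W2`, and `b2` are set by hand so that the network computes XOR, and the `forward` function is a hypothetical helper that returns the hidden activations alongside the output so we can look at them.

```python
import numpy as np

# Hand-set weights (an assumption for illustration, not learned from data).
W1 = np.array([[1.0, 1.0],
               [1.0, 1.0]])      # shape: (inputs, hidden units)
b1 = np.array([0.0, -1.0])
W2 = np.array([[1.0],
               [-2.0]])          # shape: (hidden units, outputs)
b2 = np.array([0.0])

def forward(x):
    """Run the network and return the hidden activations and the output."""
    hidden = np.maximum(0.0, x @ W1 + b1)   # ReLU hidden layer
    output = hidden @ W2 + b2
    return hidden, output

inputs = np.array([[0, 0],
                   [0, 1],
                   [1, 0],
                   [1, 1]], dtype=float)

hidden, output = forward(inputs)

# Print what each hidden unit does for each input, next to the final answer.
for x, h, y in zip(inputs, hidden, output):
    print(f"input={x}  hidden={h}  output={y[0]}")
```

Looking at the hidden column, the first unit counts how many inputs are on, and the second unit fires only when both are on. The output subtracts twice the second from the first, which is how this toy network arrives at XOR. In a real network the hidden units are rarely this tidy, which is exactly why inspecting them takes effort.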